Restoring the Commons | John Michael Greer

Jan. 23, 2013 (Archdruid Report) -- The hard work of rebuilding a post-imperial America, as I suggested in last week’s post, is going to require the recovery or reinvention of many of the things this nation chucked into the dumpster with whoops of glee as it took off running in pursuit of its imperial ambitions. The basic skills of democratic process are among the things on that list; so, as I suggested last month, are the even more basic skills of learning and thinking that undergird the practice of democracy.

All that remains crucial. Still, it so happens that a remarkably large number of the other things that will need to be put back in place are all variations of a common theme. What’s more, it’s a straightforward theme -- or, more precisely, would be straightforward if so many people these days weren’t busy trying to pretend that the concept at its center either doesn’t exist or doesn’t present the specific challenges that have made it so problematic in recent years. The concept in question? The mode of collective participation in the use of resources, extending from the most material to the most abstract, that goes most often these days by the name of “the commons.”

The redoubtable green philosopher Garrett Hardin played a central role decades ago in drawing attention to the phenomenon in question with his essay The Tragedy of the Commons. It’s a remarkable work, and it’s been rendered even more remarkable by the range of contortions engaged in by thinkers across the economic and political spectrum in their efforts to evade its conclusions. Those maneuvers have been tolerably successful; I suspect, for example, that many of my readers will recall the flurry of claims a few years back that the late Nobel Prize-winning economist Elinor Ostrom had “disproved” Hardin with her work on the sustainable management of resources.

In point of fact, she did no such thing. Hardin demonstrated in his essay that an unmanaged commons faces the risk of a vicious spiral of mismanagement that ends in the commons’ destruction; Ostrom got her Nobel, and deservedly so, by detailed and incisive analysis of the kinds of management that prevent Hardin’s tragedy of the commons from taking place. A little later in this essay, we’ll get to why those kinds of management are exactly what nobody in the mainstream of American public life wants to talk about just now; the first task at hand is to walk through the logic of Hardin’s essay and understand exactly what he was saying and why it matters.

Hardin asks us to imagine a common pasture, of the sort found in medieval villages across Europe. The pasture is owned by the village as a whole; each of the villagers has the right to put his cattle out to graze on the pasture. The village as a whole, however, has no claim on the milk the cows produce; that belongs to the villager who owns any given cow. The pasture is a collective resource, from which individuals are allowed to extract private profit; that’s the basic definition of a commons.

In the Middle Ages, such arrangements were common across Europe, and they worked well because they were managed by tradition, custom, and the immense pressure wielded by informal consensus in small and tightly knit communities, backed up where necessary by local manorial courts and a body of customary law that gave short shrift to the pursuit of personal advantage at the expense of others. The commons that Hardin asks us to envision, though, has no such protections in place. Imagine, he says, that one villager buys additional cows and puts them out to graze on the common pasture. Any given pasture can only support so many cows before it suffers damage; to use the jargon of the ecologist, it has a fixed carrying capacity for milk cows, and exceeding the carrying capacity will degrade the resource and lower its future carrying capacity. Assume that the new cows raise the total number of cows past what the pasture can support indefinitely, so once the new cows go onto the pasture, the pasture starts to degrade.

Notice how the benefits and costs sort themselves out. The villager with the additional cows receives all the benefit of the additional milk his new cows provide, and he receives it right away. The costs of his action, by contrast, are shared with everyone else in the village, and their impact is delayed, since it takes time for pasture to degrade. Thus, according to today’s conventional economic theories, the villager is doing the right thing. Since the milk he gets is worth more right now than the fraction of the discounted future cost of the degradation of the pasture he will eventually have to carry, he is pursuing his own economic interest in a rational manner.

The other villagers, faced with this situation, have a choice of their own to make. (We’ll assume, again, that they don’t have the option of forcing the villager with the new cows to get rid of them and return the total herd on the pasture to a level it can support indefinitely.) They can do nothing, in which case they bear the costs of the degradation of the pasture but gain nothing in return, or they can buy more cows of their own, in which case they also get more milk, but the pasture degrades even faster. According to most of today’s economic theories, the latter choice is the right one, since it allows them to maximize their own economic interest in exactly the same way as the first villager. The result of the process, though, is that a pasture that would have kept a certain number of cattle fed indefinitely is turned into a barren area of compacted subsoil that won’t support any cattle at all. The rational pursuit of individual advantage thus results in permanent impoverishment for everybody.
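The spiral described above can be sketched as a toy simulation. Everything in it is an illustrative assumption rather than anything taken from Hardin’s essay: ten villagers, a pasture that starts out able to feed 100 cows, a rule that each cow over the limit destroys half a cow’s worth of future carrying capacity, and the hypothetical function name `simulate_pasture`. It compares a herd held at the carrying capacity with one in which every villager adds a cow each season.

```python
def simulate_pasture(villagers=10, capacity=100.0, seasons=30,
                     managed=True, damage_rate=0.5):
    """Return the milk yield per season (one unit per fully fed cow).

    All parameters are illustrative assumptions, not figures from Hardin.
    """
    # Start with the herd exactly at what the pasture can support.
    cows = [int(capacity // villagers)] * villagers
    yields = []
    for _ in range(seasons):
        if not managed:
            # Unmanaged commons: every villager adds a cow each season.
            cows = [c + 1 for c in cows]
        total = sum(cows)
        # The pasture cannot feed more cows than its current capacity.
        yields.append(min(float(total), capacity))
        # Overgrazing degrades the pasture and lowers future capacity.
        excess = max(0.0, total - capacity)
        capacity = max(0.0, capacity - damage_rate * excess)
    return yields


managed = simulate_pasture(managed=True)
unmanaged = simulate_pasture(managed=False)
```

Under these assumptions the managed pasture yields the same amount of milk every season, while the unmanaged one drops to nothing within a handful of seasons, even though each villager’s decision to add a cow looked sensible at the moment it was made.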

This may seem like common sense. It is common sense, but when Hardin first published “The Tragedy of the Commons” in 1968, it went off like a bomb in the halls of academic economics. Since Adam Smith’s time, one of the most passionately held beliefs of capitalist economics has been the insistence that individuals pursuing their own economic interest without interference from government or anyone else will reliably produce the best outcome for everybody. You’ll still hear defenders of free market economics making that claim, as if nobody but the Communists ever brought it into question. That’s why very few people like to talk about Hardin’s tragedy of the commons these days; it makes it all but impossible to uphold a certain bit of popular, appealing, but dangerous nonsense.

Does this mean that the rational pursuit of individual advantage always produces negative results for everyone? Not at all. The theorists of capitalism can point to equally cogent examples in which Adam Smith’s invisible hand passes out benefits to everyone, and a case could probably be made that this happens more often than the opposite. The fact remains that the opposite does happen, not merely in theory but also in the real world, and that the consequences of the tragedy of the commons can reach far beyond the limits of a single village.

Hardin himself pointed to the destruction of the world’s oceanic fisheries by overharvesting as an example, and it’s a good one. If current trends continue, many of my readers can look forward, over the next couple of decades, to tasting the last seafood they will ever eat. A food resource that could have been managed sustainably for millennia to come is being annihilated in our lifetimes, and the logic behind it is that of the tragedy of the commons: participants in the world’s fishing industries, from giant corporations to individual boat owners and their crews, are pursuing their own economic interests, and exterminating one fishery after another in the process.

Another example? The worldwide habit of treating the atmosphere as an aerial sewer into which wastes can be dumped with impunity. Every one of my readers who burns any fossil fuel, for any purpose, benefits directly from being able to vent the waste CO2 directly into the atmosphere, rather than having to cover the costs of disposing of it in some other way. As a result of this rational pursuit of personal economic interest, there’s a very real chance that most of the world’s coastal cities will have to be abandoned to the rising oceans over the next century or so, imposing trillions of dollars of costs on the global economy.

Plenty of other examples of the same kind could be cited. At this point, though, I’d like to shift focus a bit to a different class of phenomena, and point to the Glass-Steagall Act, a piece of federal legislation that was passed by the U.S. Congress in 1933 and repealed in 1999. The Glass-Steagall Act made it illegal for banks to engage in both consumer banking activities such as taking deposits and making loans, and investment banking activities such as issuing securities; banks had to choose one or the other. The firewall between consumer banking and investment banking was put in place because in its absence, in the years leading up to the 1929 crash, most of the banks in the country had gotten over their heads in dubious financial deals linked to stocks and securities, and the collapse of those schemes played a massive role in bringing the national economy to the brink of total collapse.

By the 1990s, such safeguards seemed unbearably dowdy to a new generation of bankers, and after a great deal of lobbying the provisions of the Glass-Steagall Act were eliminated. Those of my readers who didn’t spend the last decade hiding under a rock know exactly what happened thereafter: banks went right back to the bad habits that got their predecessors into trouble in 1929, profited mightily in the short term, and proceeded to inflict major damage on the global economy when the inevitable crash came in 2008.

That is to say, actions performed by individuals (and those dubious “legal persons” called corporations) in the pursuit of their own private economic advantage garnered profits over the short term for those who engaged in them, but imposed long-term costs on everybody. If this sounds familiar, dear reader, it should. When individuals or corporations profit from their involvement in an activity that imposes costs on society as a whole, that activity functions as a commons, and if that commons is unmanaged the tragedy of the commons is a likely result. The American banking industry before 1933 and after 1999 functioned, and currently functions, as an unmanaged commons; between those years, it was a managed commons. While it was an unmanaged commons, it suffered from exactly the outcome Hardin’s theory predicts; when it was a managed commons, by contrast, a major cause of banking failure was kept at bay, and the banking sector was more often a source of strength than a source of weakness to the national economy.

It’s not hard to name other examples of what I suppose we could call “commons-like phenomena” -- that is, activities in which the pursuit of private profit can impose serious costs on society as a whole -- in contemporary America. One that bears watching these days is food safety. It is to the immediate financial advantage of businesses in the various industries that produce food for human consumption to cut costs as far as possible, even if this occasionally results in unsafe products that cause sickness and death to people who consume them; the benefits in increased profits are immediate and belong entirely to the business, while the costs of increased morbidity and mortality are borne by society as a whole, provided that the business’s legal team is good enough to keep the inevitable lawsuits at bay. Once again, the asymmetry between benefits and costs produces a calculus that brings unwelcome outcomes.

The American political system, in its pre-imperial and early imperial stages, evolved a distinctive response to these challenges. The Declaration of Independence, the wellspring of American political thought, defines the purpose of government as securing the rights to life, liberty, and the pursuit of happiness. There’s more to that often-quoted phrase than meets the eye. In particular, it doesn’t mean that governments are supposed to provide anybody with life, liberty, or happiness; their job is simply to secure for their citizens certain basic rights, which may be inalienable -- that is, they can’t be legally transferred to somebody else, as they could under feudal law -- but are far from absolute. What citizens do with those rights is their own business, at least in theory, so long as their exercise of their rights does not interfere too drastically with the ability of others to do the same thing. The assumption, then and later, was that citizens would use their rights to seek their own advantage, by means as rational or irrational as they chose, while the national community as a whole would cover the costs of securing those rights against anyone and anything that attempted to erase them.

That is to say, the core purpose of government in the American tradition is the maintenance of the national commons. It exists to manage the various commons and commons-like phenomena that are inseparable from life in a civilized society, and thus has the power to impose such limits on people (and corporate pseudopeople) as will prevent their pursuit of personal advantage from leading to a tragedy of the commons in one way or another. Restricting the capacity of banks to gamble with depositors’ money is one such limit; restricting the freedom of manufacturers to sell unsafe food is another, and so on down the list of reasonable regulations. Beyond those necessary limits, government has no call to intervene; how people choose to live their lives, exercise their liberties, and pursue happiness is up to them, so long as it doesn’t put the survival of any part of the national commons at risk.

As far as I know, you won’t find that definition taught in any of the tiny handful of high schools that still offer civics classes to young Americans about to reach voting age. Still, it’s a neat summary of generations of political thought in pre-imperial and early imperial America. These days, by contrast, it’s rare to find this function of government even hinted at. Rather, the function of government in late imperial America is generally seen as a matter of handing out largesse of various kinds to any group organized or influential enough to elbow its way to a place at the feeding trough. Even those people who insist they are against all government entitlement programs can be counted on to scream like banshees if anything threatens those programs from which they themselves benefit; the famous placard reading “Government Hands Off My Medicare” is an embarrassingly good reflection of the attitude that most American pseudoconservatives adopt in practice, however loudly they decry government spending in theory.

A strong case can be made, though, for jettisoning the notion of government as national sugar daddy and returning to the older notion of government as guarantor of the national commons. The central argument in that case is simply that in the wake of empire, the torrents of imperial tribute that made the government largesse of the recent past possible in the first place will go away. As the United States loses the ability to command a quarter of the world’s energy supplies and a third of its natural resources and industrial product, and has to make do with the much smaller share it can expect to produce within its own borders, the feeding trough in Washington DC -- not to mention its junior equivalents in the fifty state capitals, and so on down the pyramid of American government -- is going to run short.

In point of fact, it’s already running short. That’s the usually unmentioned factor behind the intractable gridlock in our national politics: there isn’t enough largesse left to give every one of the pressure groups and veto blocs its accustomed share, and the pressure groups and veto blocs are responding to this unavoidable problem by jamming up the machinery of government with ever more frantic efforts to get whatever they can. That situation can only end in crisis, and probably in a crisis big enough to shatter the existing order of things in Washington DC; after the rubble stops bouncing, the next order of business will be piecing together some less gaudily corrupt way of managing the nation’s affairs.

That process of reconstruction might be furthered substantially if the pre-imperial concept of the role of government were to get a little more air time these days. I’ve spoken at quite some length here and elsewhere about the very limited contribution that grand plans and long discussions can make to an energy future that’s less grim than the one toward which we’re hurtling at the moment, and there’s a fair bit of irony in the fact that I’m about to suggest exactly the opposite conclusion with regard to the political sphere. Still, the circumstances aren’t the same. The time for talking about our energy future was decades ago, when we still had the time and the resources to get new and more sustainable energy and transportation systems in place before conventional petroleum production peaked and sent us skidding down the far side of Hubbert’s peak. That time is long past, the options remaining to us are very narrow, and another round of conversation won’t do anything worthwhile to change the course of events at this point.

That’s much less true of the political situation, because politics are subject to rules very different from the implacable mathematics of petroleum depletion and net energy. At some point in the not too distant future, the political system of the United States of America is going to tip over into explosive crisis, and at that time ideas that are simply talking points today have at least a shot at being enacted into public policy. That’s exactly what happened at the beginning of the three previous cycles of anacyclosis I traced out in a previous post in this series. In 1776, 1860, and 1933, ideas that had been on the political fringes not that many years beforehand redefined the entire political dialogue, and in all three cases this was possible because those once-fringe ideas had been widely circulated and widely discussed, even though most of the people who circulated and discussed them never imagined that they would live to see those ideas put into practice.

There are plenty of ideas about politics and society in circulation on the fringes of today’s American dialogue, to be sure. I’d like to suggest, though, that there’s a point to reviving an older, pre-imperial vision of what government can do, and ought to do, in the America of the future. A political system that envisions its role as holding an open space in which citizens can pursue their own dreams and experiment with their own lives is inherently likely to be better at dissensus than more regimented alternatives, whether those come from the left or the right -- and dissensus, to return to a central theme of this blog, is the best strategy we’ve got as we move into a future where nobody can be sure of having the right answers.

- Created Tuesday, February 12, 2013

- Last modified Wednesday, November 06, 2013

World Desk Activities

www.niemanlab.org/2024/04/inside-newsweek-ai-exper…

www.journalismfestival.com/programme/2024/reader-r…

Reader revenue beyond the English language – – International Journalism Festival

In the past few months, many news publishers in the US have announced layoffs. Others have tweaked or abandoned their paywalls and pursued more open models.…

phys.org/news/2024-04-surf-clams-coast-virginia-re…

Surf clams off the coast of Virginia reappear and rebound

The Atlantic surf clam, an economically valuable species that is the main ingredient in clam chowder and fried clam strips, has returned to Virginia waters…

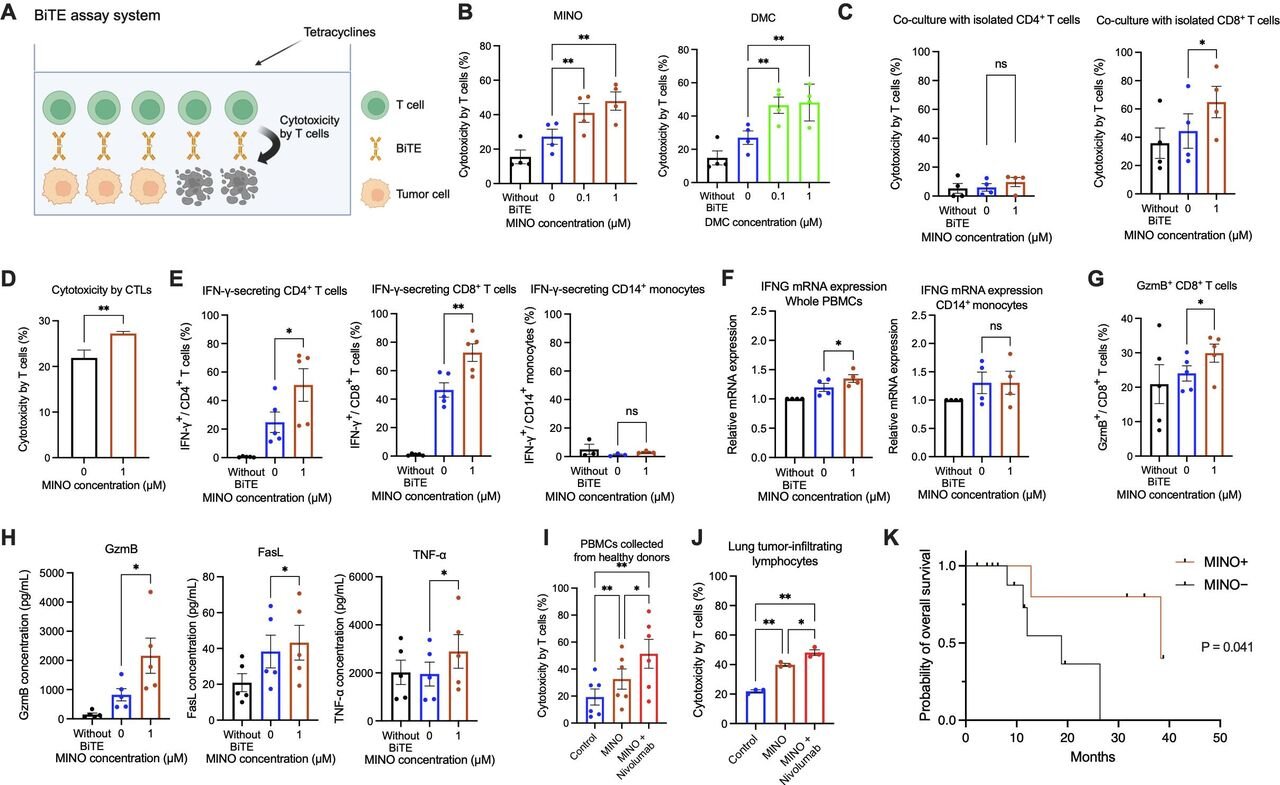

medicalxpress.com/news/2024-04-antibiotics-reveal-…

Antibiotics reveal a new way to fight cancer

Cancer cells grow and spread by hiding from the body's immune system. Immunotherapy allows the immune system to find and attack hidden cancer cells, helping…

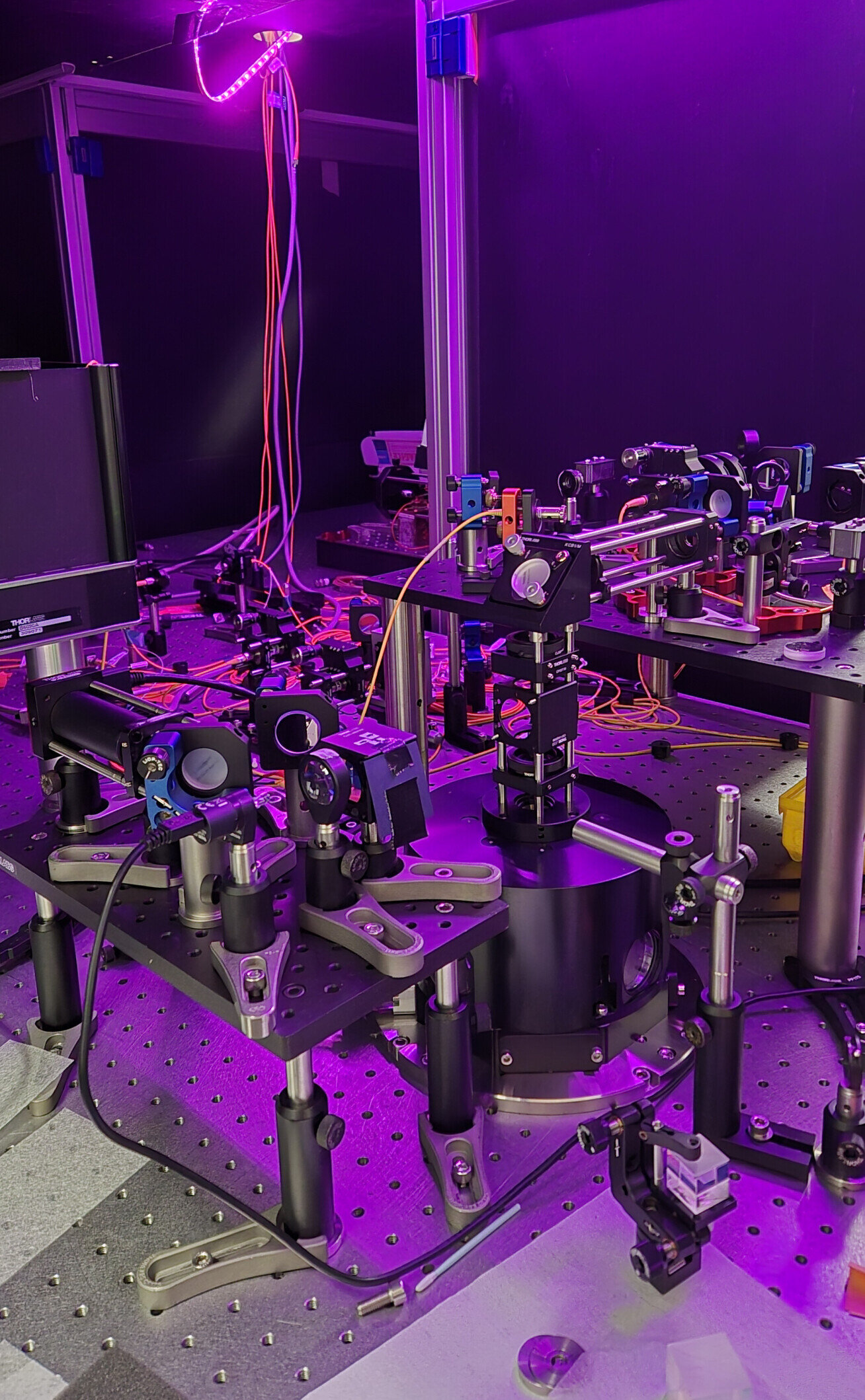

phys.org/news/2024-04-crucial-quantum-internet.htm…

Crucial connection for 'quantum internet' made for the first time

Researchers have produced, stored, and retrieved quantum information for the first time, a critical step in quantum networking.

medicalxpress.com/news/2024-04-women-major-complic…

Women who experience major complications during pregnancy found to have increased risk of early death years later

A team of medical researchers from the University of Texas Health Science Center, in the U.S., and Lund University, in Sweden, has found via study…

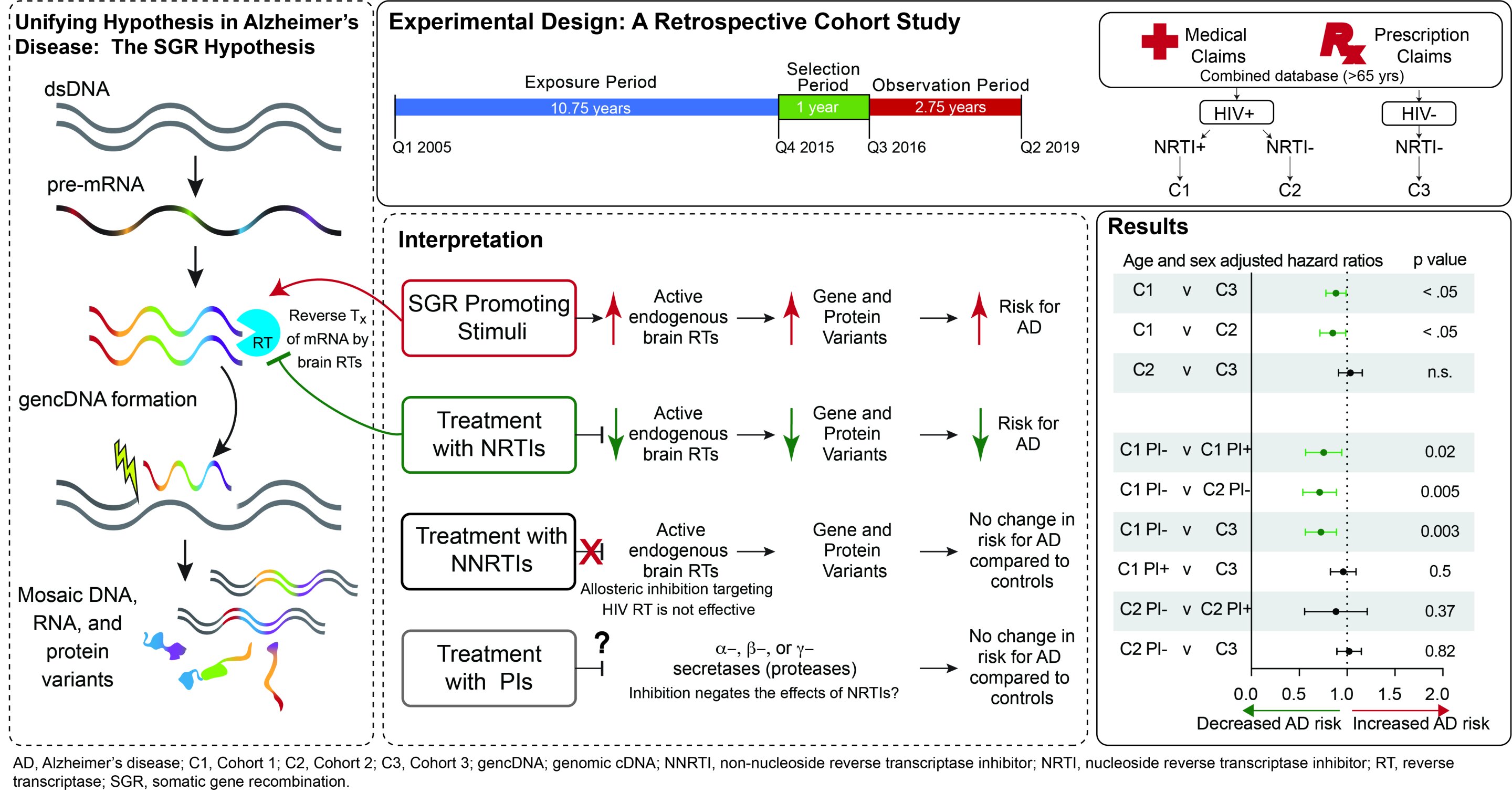

medicalxpress.com/news/2024-04-common-hiv-treatmen…

Common HIV treatments may aid Alzheimer's disease patients

Alzheimer's disease (AD) currently afflicts nearly seven million people in the U.S. With this number expected to grow to nearly 13 million by 2050, the…

medicalxpress.com/news/2024-04-adolescent-stress-p…

Study suggests adolescent stress may raise risk of postpartum depression in adults

In a new study, a Johns Hopkins Medicine-led research team reports that social stress during adolescence in female mice later results in prolonged elevation of…

Latest Stories

Electronic Frontier Foundation

- Speaking Freely: Obioma Okonkwo April 23, 2024

- Screen Printing 101: EFF's Spring Speakeasy at Babylon Burning April 23, 2024

- Podcast Episode: Right to Repair Catches the Car April 23, 2024

- U.S. Senate and Biden Administration Shamefully Renew and Expand FISA Section 702, Ushering in a Two Year Expansion of Unconstitutional Mass Surveillance April 22, 2024

The Intercept

- “Little Home Market”: The Connecticut Company Accused of Fueling an Execution Spree April 25, 2024

- House Responds to Israeli-Iranian Missile Exchange by Taking Rights Away from Americans April 25, 2024

- U.S.-Trained Burkina Faso Military Executed 220 Civilians April 25, 2024

- “Kill All Arabs”: The Feds Are Investigating UMass Amherst for Anti-Palestinian Bias April 24, 2024

VTDigger

- Final Reading: New USDA program aims to help towns access federal disaster relief April 25, 2024

- Vermont’s new fair and impartial policing policy aims to reduce bias based on citizenship April 25, 2024

- Mike Pieciak announces reelection bid for Vermont state treasurer April 25, 2024

- Senate’s version of budget would reduce motel program room capacity by a third April 25, 2024

Mountain Times -- Central Vermont

- Mountain Times -Volume 52, Number 17, April 24-20, 2024 April 24, 2024

- Weekly Horoscope — April 24-30, 2024 April 24, 2024

- Loon vs. Canada goose: A battle for Goose Poop Island April 24, 2024

- A break in the action April 24, 2024